GECCO2020 Competition on Evolutionary Multi-task Optimization | |||||

|

|||||

|---|---|---|---|---|---|

RESULTS:

OVERVIEW AND AIMThe original inspiration of artificial intelligence (AI) was to build autonomous systems that were capable of demonstrating human-like behaviours within certain application areas. However, given the present-day data deluge, rapid increase in computational resources, and key improvements in machine learning algorithms, modern AI systems have begun to far exceed humanly achievable performance across a variety of domains. Some well known examples of this reality include IBM Watson winning Jeopardy!, and Google DeepMind’s AlphaGo beating the world’s leading Go player. Given such advances, it is deemed that what we foresee for AI in the future need no longer be limited to an anthropomorphic vision. Indeed, it may be more meaningful to build AI systems that complement and augment human intelligence, excelling at those tasks for which humans are ill-equipped. In this regard, one of the long-standing goals of AI has been to effectively multitask; i.e., learning to solve many tasks at the same time [1]. It is worth noting that although humans are generally unable to tackle multiple problems simultaneously, or within short timespans – as interleaving more than one task usually entails a considerable switching cost during which the brain must readjust from one to the other – machines are largely free from such computational bottlenecks. Thus, not only can machines move more fluidly between tasks, but, when related tasks are bundled together, it is also possible for them to seamlessly transfer data (encapsulating some problem-solving knowledge) among them. As a result, while an AI attempts to solve a complex task, several other simpler ones may be unintentionally solved. Moreover, the knowledge learned unintentionally can then be harnessed for intentional use. In line with the above, evolutionary multitasking is an emerging concept in computational intelligence that realises the theme of efficient multi-task problem-solving in the domain of numerical optimization [2-5]. It is worth noting that in the natural world, the process of evolution has, in a single run, successfully produced diverse living organisms that are skilled at survival in a variety of ecological niches. In other words, the process of evolution can itself be thought of as a massive multi-task engine where each niche forms a task in an otherwise complex multifaceted fitness landscape, and the population of all living organisms is simultaneously evolving to survive in one niche or the other. Interestingly, it may happen that the genetic material evolved for one task is effective for another as well, in which case the scope for inter-task genetic transfers facilitates frequent leaps in the evolutionary progression towards superior individuals. Being nature-inspired optimisation procedures, it has recently been shown that evolutionary algorithms (EAs) are not only equipped to mimic Darwinian principles of “survival-of-the-fittest”, but their reproduction operators are also capable of inducing the afore-stated inter-task genetic transfers in multitask optimisation settings; although, the practical implications of the latter are yet to be fully studied and exploited in the literature. The potential efficacy of this new perspective, as opposed to traditional approaches of solving each optimisation problem in isolation, has nevertheless been unveiled by so-called multi-factorial EAs (MFEAs). Evolutionary multitasking opens up new horizons for researchers in the field of evolutionary computation. It provides a promising means to deal with the ever-increasing number, variety and complexity of optimisation tasks. More importantly, rapid advances in cloud computing could eventually turn optimisation into an on-demand service hosted on the cloud. In such a case, a variety of optimisation tasks would be simultaneously executed by the service enginewhere evolutionary multitasking may harness the underlying synergy between multiple tasks to provide consumers with faster and better solutions. TEST SUITESSingle-objective and multi-objective continuous optimization have been intensively studied in the community of evolutionary optimization where many well-known test suites are available. As a preliminary attempt, we have designed two MTO test suites [6],[7] for single-objective and multi-objective continuous optimization tasks, respectively. The test suite for multi-task single-objective optimization (MTSOO) contains ten 50-task MTO benchmark problems. Each of the 50-task MTO problem contains 50 single-objective continuous optimization tasks, which bear certain commonality and complementarity in terms of the global optimum and the fitness landscape. These MTO problems possess different degrees of latent synergy between their involved component tasks. The test suite for multi-task multi-objective optimization (MTMOO) includes ten 50-task MTO benchmark problems. Each of the 50-task MTO problem contains 50 multi-objective continuous optimization tasks, which bear certain commonality and complementarity in terms of the Pareto optimal solutions and the fitness landscape. The MTO problems feature different degrees of latent synergy among their involved 50 component tasks. All benchmark problems included in these two test suites are developed based on the mechanisms presented in technical reports [6] and [7], respectively. The specific benchmarks can be downloadable here. All benchmark problems included in these two test suites will be released soon. COMPETITION PROTOCOLPotential participants in this competition may target at either or both of MTSOO and MTMOO while using all benchmark problems in the corresponding test suites as described above for performance evaluation. For MTSOO test suite:(1) Experimental settingsFor each of 10 benchmark problems in this test suite, an algorithm is required to be executed for 30 runs where each run should employ different random seeds for the pseudorandom number generator(s) used in the algorithm. Note: It is prohibited to execute multiple 30 runs and deliberately pick up the best one. For all the benchmark problems, the maximal number of function evaluations (maxFEs) used to terminate an algorithm in a run is set to 5,000,000. In the multitasking scenario, one function evaluation means calculation of the objective function value of any component task without distinguishing different tasks. Note: The parameter setting of an algorithm is required to be identical for each benchmark problem in this test suite, respectively. Participants are required to report the used parameter setting for each problem in the final submission to the competition. Please refer to “SUBMISSION GUIDELINE” for more details. (2) Intermediate results required to be recordedWhen an algorithm is executed to solve a specific benchmark problem in a run, the so far achieved best function error value (BFEV) w.r.t. each component task of this problem should be recorded when the current number of function evaluations reaches any of the predefined values which are set to k*maxFEs/1000, k =1, …, 1000 in this competition. BFEV is calculated as the difference between the best objective function value achieved so far andthe globally optimal objective function value known in advance. As a result, for any benchmark problem, 1000 BFEVs would be recorded w.r.t. each component task in each run. Intermediate results for each benchmark problem are required to be saved separately into ten “.txt” files named as “MTSOO_P1.txt”, …, “MTSOO_P10.txt” where the data contained in each “.txt” file must conform to the following format:

where BFEV_{j,k}^i (i = 1, ..., 50; j = 1, …, 30; k = 1, …, 1000) stands for the BFEV w.r.t. the ith

component task obtained in the jth run at the kth predefined number of function evaluations.

The first column stores the predefined numbers of function evaluations at which intermediate

results are recorded. The subsequent columns store intermediate results for each of 30 runs

with each run occupying 50 consecutive columns w.r.t. 50 component tasks, respectively.

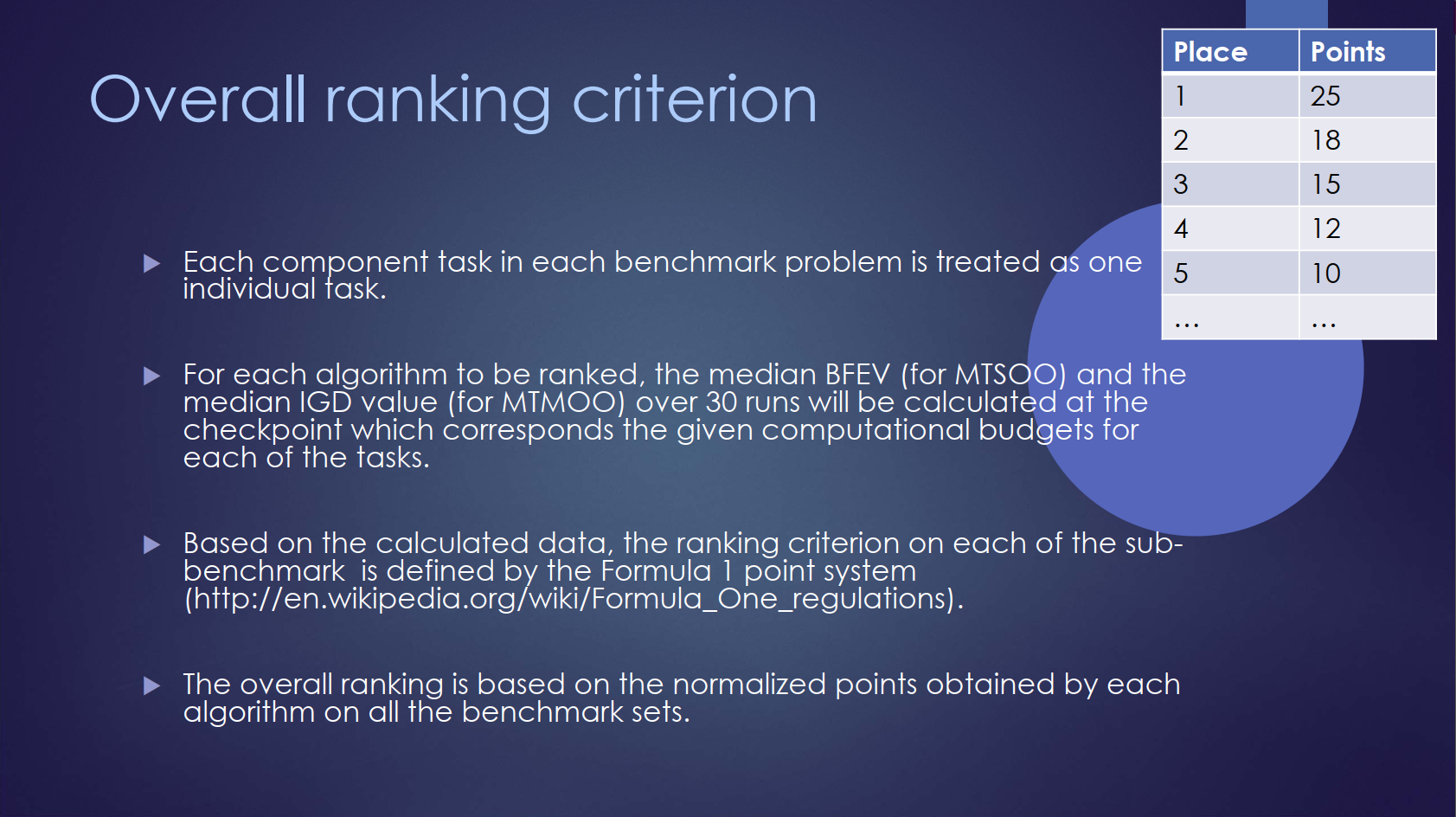

(3) Overall ranking criterionTo derive the overall ranking for each algorithm participating in the competition, we will take into account of the performance of an algorithm on each component task in each benchmark problem under varying computational budgets from small to large. Specifically, we will treat each component task in each benchmark problem as one individual task, ending up with a total of 500 individual tasks. For each algorithm to be ranked, the median BFEV over 30 runs will be calculated at each checkpoint which corresponds to different computational budgets for each of 500 individual tasks. Based on these calculated data, the overall ranking criterion will be defined. To avoid deliberate calibration of the algorithm to cater for the overall ranking criterion, we will release the formulation of the overall ranking criterion after the competition submission deadline. For MTMOO test suite:(1) Experimental settingsFor each of 10 benchmark problems in this test suite, an algorithm is required to be executed for 30 runs where each run should employ different random seeds for the pseudorandom number generator(s) used in the algorithm. Note: It is prohibited to execute multiple 30 runs and deliberately pick up the best one. For all the benchmark problems, the maximal number of function evaluations (maxFEs) used to terminate an algorithm in a run is set to 5,000,000. In the multitasking scenario, one function evaluation means calculation of the objective function value of any component task without distinguishing different tasks. Note: The parameter setting of an algorithm is required to be identical for each benchmark problem in this test suite, respectively. Participants are required to report the used parametersetting for each problem in the final submission to the competition. Please refer to “SUBMISSION GUIDELINE” for more details. (2) Intermediate results required to be recordedWhen an algorithm is executed to solve a specific benchmark problem in a run, the obtained inverted generational distance (IGD) value w.r.t. each component task of this problem should be recorded when the current number of function evaluations reaches any of the predefined values which are set to k*maxFEs/1000, k =1, …, 1000 in this competition. IGD [8] is a commonly used performance metric in multi-objective optimization to evaluate the quality (convergence and diversity) of the currently obtained Pareto front by comparing it to the optimal Pareto front known in advance. As a result, for any benchmark problem, 1000 IGD values would be recorded w.r.t. each component task in each run. Intermediate results for each benchmark problem are required to be saved separately into ten “.txt” files named as “MTMOO_P1.txt”, …, “MTMOO_P10.txt” where the data contained in each “.txt” file must conform to the following format:

where IGD_{j,k}^i (i = 1, …, n ;j = 1, …, 30; k = 1, …, m) stands for the IGD value w.r.t. the ith component task obtained in the jth run at the kth predefined number of function evaluations. Note: n=50 and m=1000 for 50-task benchmark problems.

(3) Overall ranking criterionTo derive the overall ranking for each algorithm participating in the competition, we will take into account of the performance of an algorithm on each component task in each benchmark problem under varying computational budgets from small to large. Specifically, we will treat each component task in each benchmark problem as one individual task, ending up with a total of 500 individual tasks. For each algorithm compared for ranking, the median IGD value over 30 runs will be calculated at each checkpoint corresponding to different computational budgets for each of 500 individual tasks. Based on these calculated data, the overall ranking criterion will be defined. To avoid deliberate calibration of the algorithm to cater for the overall ranking criterion, we will release the formulation of the overall ranking criterion after the competition submission deadline. SUBMISSION GUIDELINE

please archive the following files into a single .zip file and then send it to

mtocompetition@gmail.com before the competition submission deadline (

Here, "param_SO.txt" and "param_MO.txt" contain the parameter setting of the algorithm for MTSOO and MTMOO test suites, respectively. "code.zip" contains the source code of the algorithm which should allow the generation of reproducible results. If you would like to participate in the competition, please kindly inform us about your interest via email (mtocompetition@gmail.com) so that we can update you about any bug fixings and/or the extension of the deadline. COMPETITION ORGANIZERSLiang Feng Kai Qin Abhishek Gupta Yuan Yuan Eric Scott Yew-Soon Ong Xu Chi REFERENCES

[1] R. Caruana, “Multitask learning”, Machine Learning, 28(1): 41-75, 1997. |

|||||